Distillation as Divergence Minimization

Compress a slow multi-step teacher into a few-step (or one-step) student.

Masked Diffusion Models (MDMs) operate on discrete tokens: there is no probability-flow ODE (PF-ODE) and no score function, so existing distillation methods that rely on them break. Two unique challenges:

Key insight: minimize $D_h(r \,\|\, 1)$, i.e. match the teacher-student density ratio $r$ to $\mathbf{1}$ rather than matching the distributions directly:

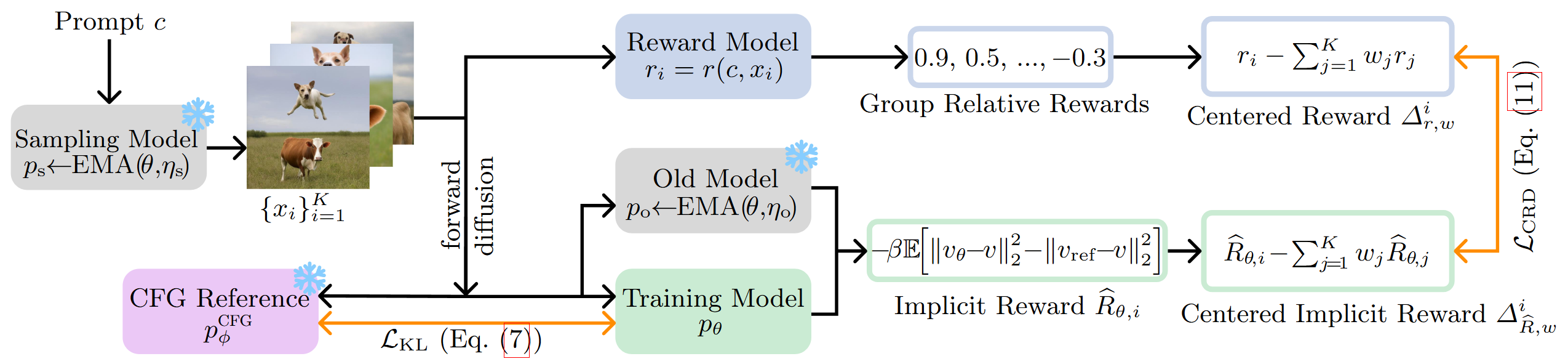
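A minimal sketch of density-ratio matching on a toy categorical distribution may help make the objective concrete. It assumes (this is not stated in the text above) that $r = p_{\text{teacher}} / p_{\theta}$, that $D_h(r \,\|\, 1)$ is the Bregman divergence generated by a convex function $h$, and that it is averaged under the student distribution. The function name and the toy probabilities are hypothetical.

```python
import math

def bregman_ratio_divergence(p_teacher, p_student, h, h_prime):
    """Assumed form: E_{x ~ p_student}[ h(r) - h(1) - h'(1) * (r - 1) ],
    with density ratio r = p_teacher / p_student (an assumption of this sketch)."""
    total = 0.0
    for pt, ps in zip(p_teacher, p_student):
        r = pt / ps
        total += ps * (h(r) - h(1.0) - h_prime(1.0) * (r - 1.0))
    return total

def kl(p, q):
    """Plain KL(p || q) for categorical distributions, used only as a check."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q))

# Toy categorical distributions (illustrative values only).
p_teacher = [0.5, 0.3, 0.2]
p_student = [0.4, 0.4, 0.2]

# h(r) = r log r gives KL(teacher || student) under these assumptions.
forward = bregman_ratio_divergence(
    p_teacher, p_student, lambda r: r * math.log(r), lambda r: math.log(r) + 1.0
)
# h(r) = -log r gives KL(student || teacher).
reverse = bregman_ratio_divergence(
    p_teacher, p_student, lambda r: -math.log(r), lambda r: -1.0 / r
)
```

Under these assumptions, changing only $h$ moves between members of a divergence family, and the divergence is zero exactly when $r = 1$ everywhere, which is what "match the ratio to $\mathbf{1}$" means here.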

Same framework; only the target changes. Instead of a teacher, the target encodes a reward:

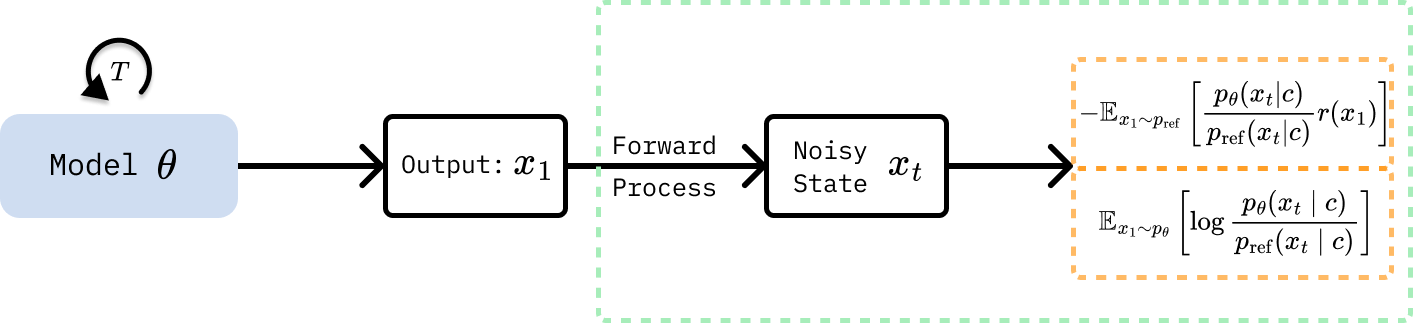

Substituting the Boltzmann target into the reverse-KL divergence and expanding:
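The expansion can be sketched as follows, assuming the target is the normalized form of $p_{\text{ref}} \cdot \exp(r(x_1))$ with normalizer $Z$, that "reverse KL" means $D_{\mathrm{KL}}(p_\theta \,\|\, p_{\text{target}})$, and dropping the conditioning on $c$ for brevity. Here $r(x_1)$ is the reward, not the density ratio used earlier.

$$
\begin{aligned}
p_{\text{target}}(x_1) &= \frac{1}{Z}\, p_{\text{ref}}(x_1)\, \exp\!\left( r(x_1) \right), \qquad Z = \mathbb{E}_{x_1 \sim p_{\text{ref}}}\!\left[ \exp\!\left( r(x_1) \right) \right], \\
D_{\mathrm{KL}}\!\left( p_\theta \,\|\, p_{\text{target}} \right) &= \mathbb{E}_{x_1 \sim p_\theta}\!\left[ \log \frac{p_\theta(x_1)}{p_{\text{ref}}(x_1)} - r(x_1) \right] + \log Z \\
&= -\,\mathbb{E}_{x_1 \sim p_\theta}\!\left[ r(x_1) \right] + D_{\mathrm{KL}}\!\left( p_\theta \,\|\, p_{\text{ref}} \right) + \log Z .
\end{aligned}
$$

Since $\log Z$ does not depend on $\theta$, minimizing this is equivalent to maximizing expected reward with a KL penalty toward $p_{\text{ref}}$.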

Diffusion ELBO makes this tractable: $\log(p_\theta/p_\text{ref})$ ≈ difference in denoising losses at each noise level $t$ — a weighted score-matching loss.
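A rough sketch of the log-ratio estimate, under several assumptions that are not in the text above: a continuous Gaussian noising process $x_t = \sqrt{1-t}\,x + \sqrt{t}\,\epsilon$, noise-prediction models, and user-supplied per-level weights standing in for the exact ELBO weighting. All names (`log_ratio_estimate`, `oracle`, `zero_model`) are hypothetical.

```python
import math
import random

def log_ratio_estimate(x, eps_theta, eps_ref, ts, weights, rng):
    """Estimate log(p_theta(x) / p_ref(x)) as a weighted difference of
    denoising losses, sharing the same noise draw between both models.
    Schedule and weights are assumptions of this sketch."""
    total = 0.0
    for t, w in zip(ts, weights):
        eps = [rng.gauss(0.0, 1.0) for _ in x]
        a, s = math.sqrt(1.0 - t), math.sqrt(t)
        x_t = [a * xi + s * ei for xi, ei in zip(x, eps)]
        loss_theta = sum((p - e) ** 2 for p, e in zip(eps_theta(x_t, t), eps))
        loss_ref = sum((p - e) ** 2 for p, e in zip(eps_ref(x_t, t), eps))
        # A lower denoising loss corresponds to a higher ELBO, hence ref minus theta.
        total += w * (loss_ref - loss_theta)
    return total / len(ts)

x = [1.0, -0.5, 2.0]
ts = [0.1, 0.5, 0.9]
weights = [1.0, 1.0, 1.0]

def oracle(x_t, t):
    # Toy "perfect" denoiser that knows x and recovers the noise exactly.
    return [(v - math.sqrt(1.0 - t) * xi) / math.sqrt(t) for v, xi in zip(x_t, x)]

def zero_model(x_t, t):
    # Toy baseline that always predicts zero noise.
    return [0.0 for _ in x_t]

same = log_ratio_estimate(x, zero_model, zero_model, ts, weights, random.Random(0))
better = log_ratio_estimate(x, oracle, zero_model, ts, weights, random.Random(0))
```

In this toy setup, identical models give an estimate of zero and a model that denoises better than the reference gives a positive estimate. Sharing the noise draw between the two models is a design choice intended to reduce the variance of the difference.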

Both paradigms minimize $D(p_{\text{target}} \,\|\, p_{\theta})$ — they differ only in what the target means.

| Method | Target $p_{\text{target}}$ | Divergence $D$ |
|---|---|---|
| Di[M]O (arXiv:2503.15457) | $p_{\text{teacher}}(x_1 \mid c)$ | Generalized Jeffrey divergence |
| Di-Bregman (arXiv:2510.16983) | $p_{\text{teacher}}(x_1 \mid c)$ | Bregman family |
| CRD (arXiv:2603.14128) | $p_{\text{ref}} \cdot \exp\!\left( r(x_1) \right)$ | Reverse KL |